Vern Paxson took over the Software Tools lex project from Jef Poskanzer in 1982. At that point it was written in Ratfor. Around 1987, Paxson translated it into C, and flex was born.

I. COMPILER

A compiler is a program that translates human-readable source code into computer-executable machine code. To do this successfully, the source code must comply with the syntax rules of the programming language it is written in. The compiler is only a program and cannot fix your programs for you. If you make a mistake, you have to correct the syntax or the code won't compile.

The name "compiler" is primarily used for programs that translate source code from a high-level programming language to a lower-level language (e.g., assembly language or machine code). If the compiled program can run on a computer whose CPU or operating system is different from the one on which the compiler runs, the compiler is known as a cross-compiler. A program that translates from a low-level language to a higher-level one is a decompiler. A program that translates between high-level languages is usually called a language translator, source-to-source translator, or language converter. A language rewriter is usually a program that translates the form of expressions without a change of language.

When code is compiled, the following happens:

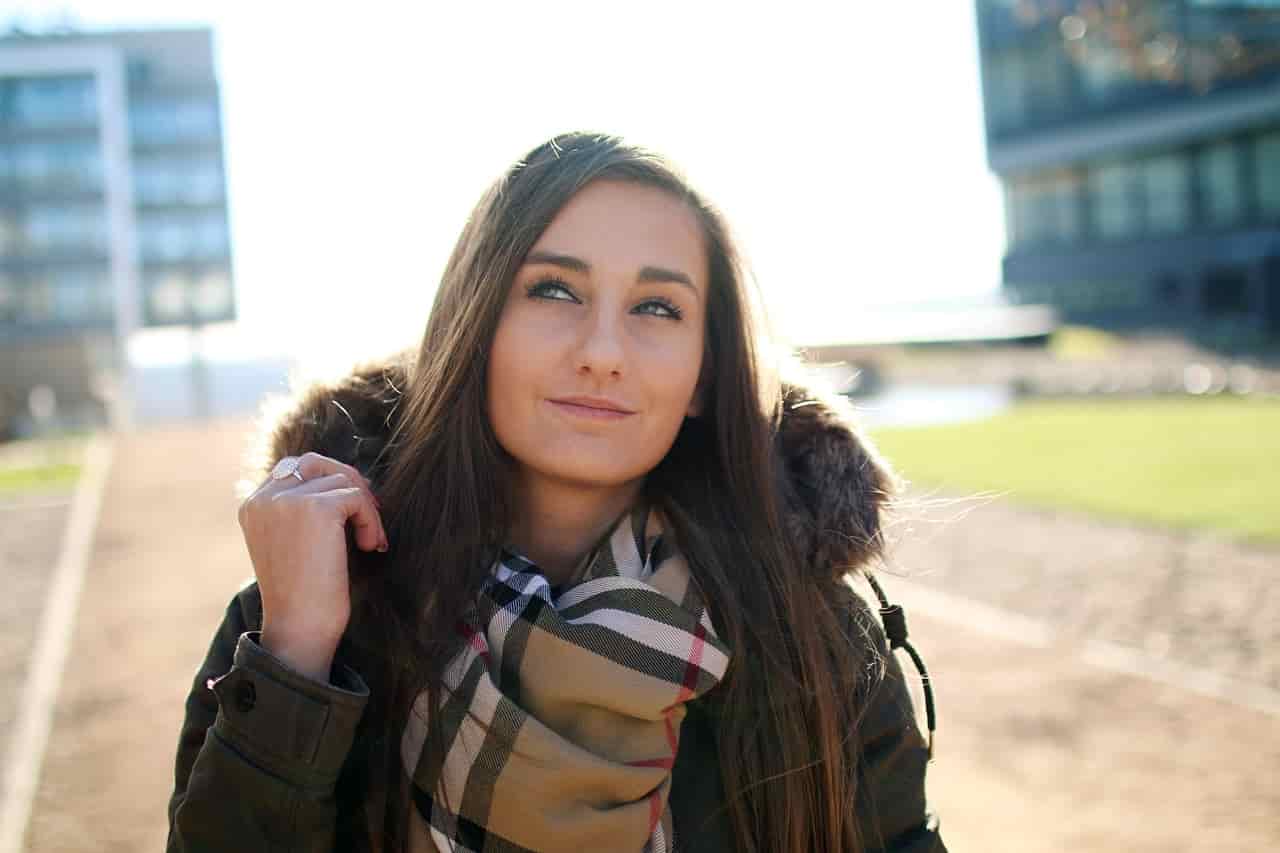

II. LEXICAL ANALYSIS

This is the first stage, in which the compiler reads a stream of characters (usually from a source code file) and produces a stream of lexical tokens. For example, the C++ code

int C= (A*B)+10;

might be analysed as these tokens:

type "int"

variable "C"

equals

left bracket

variable "A"

times

variable "B"

right bracket

plus

literal "10"

Lexical analysis, or scanning, is the process in which the stream of characters making up the source program is read from left to right and grouped into tokens. Tokens are sequences of characters with a collective meaning. There are usually only a small number of token kinds for a programming language: constants (integer, double, char, string, etc.), operators (arithmetic, relational, logical), punctuation, and reserved words.

The lexical analyzer takes a source program as input and produces a stream of tokens as output. The lexical analyzer might recognize particular instances of tokens such as:

3 or 255 for an integer constant token

"Fred" or "Wilma" for a string constant token

numTickets or queue for a variable token

Such specific instances are called lexemes. A lexeme is the actual character sequence forming a token; the token is the general class that a lexeme belongs to. Some tokens have exactly one lexeme (e.g., the > character); for others, there are many lexemes (e.g., integer constants).
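To make the token/lexeme distinction concrete, here is a minimal hand-written sketch in C. It is not how flex or any particular compiler does it; the names token_kind, next_token and count_tokens are hypothetical, and the only keyword it knows is "int". Each call returns the token class together with the lexeme that was matched. Unlike the token list above, it also reports the closing semicolon as a punctuation token.

```c
#include <assert.h>
#include <ctype.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical token classes for this sketch; a real language has more. */
enum token_kind { TOK_END, TOK_KEYWORD, TOK_IDENT, TOK_NUMBER, TOK_PUNCT };

struct token {
    enum token_kind kind;   /* the general class */
    const char *lexeme;     /* start of the lexeme inside the source text */
    size_t length;          /* number of characters in the lexeme */
};

/* Read one token starting at *cursor and advance *cursor past it. */
struct token next_token(const char **cursor)
{
    const char *p;
    struct token tok = { TOK_END, NULL, 0 };

    assert(cursor != NULL && *cursor != NULL);
    p = *cursor;
    while (isspace((unsigned char)*p))          /* whitespace separates tokens */
        p++;
    tok.lexeme = p;

    if (*p == '\0') {
        tok.kind = TOK_END;                     /* end of input */
    } else if (isalpha((unsigned char)*p) || *p == '_') {
        while (isalnum((unsigned char)*p) || *p == '_')
            p++;
        tok.kind = TOK_IDENT;
    } else if (isdigit((unsigned char)*p)) {
        while (isdigit((unsigned char)*p))
            p++;
        tok.kind = TOK_NUMBER;
    } else {
        p++;                                    /* single-character operator or punctuation */
        tok.kind = TOK_PUNCT;
    }

    tok.length = (size_t)(p - tok.lexeme);
    /* Reserved words look like identifiers; this sketch only knows "int". */
    if (tok.kind == TOK_IDENT && tok.length == 3 &&
        strncmp(tok.lexeme, "int", 3) == 0)
        tok.kind = TOK_KEYWORD;

    *cursor = p;
    return tok;
}

/* Count the tokens in a source string, not counting the end marker. */
size_t count_tokens(const char *source)
{
    size_t count = 0;
    while (next_token(&source).kind != TOK_END)
        count++;
    return count;
}
```

Calling next_token repeatedly on the statement shown earlier yields the keyword int, the identifier C, the punctuation =, and so on, with the caller's cursor remembering where scanning left off.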

fig 1. Lexical analyser

III. SYNTACTICAL ANALYSIS

The output from the lexical analyzer goes to the syntactical analyzer part of the compiler. This uses the rules of the grammar to decide whether the input is valid or not. Unless variables A and B had been previously declared and were in scope, the compiler might say

'A': undeclared identifier.

Had they been declared but not initialized, the compiler would issue a warning:

Local variable 'A' used without been initialized.

You should never ignore compiler warnings. They can break your code in odd and unexpected ways. Always fix compiler warnings.

The purpose of syntactic analysis is to determine the structure of the source text. This structure consists of a hierarchy of phrases, the smallest of which are the basic symbols and the largest of which is the sentence. It can be described by a tree with one node for each phrase. Basic symbols are represented by leaf nodes and other phrases by interior nodes. The root of the tree represents the sentence.

This paper explains how to use a `.con' specification to describe the set of all possible phrases that may appear in sentences of a language. It also discusses methods of resolving ambiguity in such descriptions, and how to carry out arbitrary actions during the recognition process itself. The use of `.perr' specifications to improve the error recovery of the generated parser is described as well.

Computations over the input can be written with attribute grammar specifications that are based on an abstract syntax. The abstract syntax describes the structure of an abstract syntax tree, much the way the concrete syntax describes the phrase structure of the input. Eli uses a tool called Maptool that automatically generates the abstract syntax tree based on an analysis of the concrete and abstract syntaxes and user specifications given in files of type `.map'. This manual describes the rules used by Maptool to determine a unique correspondence between the concrete and abstract syntax, and the information users can provide in `.map' files to assist in the process.

IV. GENERATING MACHINE CODE

Assuming that the compiler has successfully completed these stages:

Lexical analysis.

Syntactical analysis.

The final stage is generating machine code. This can be an extremely complicated process, especially with modern CPUs. The compiled executable should run as fast as possible, and its speed can vary enormously according to

The quality of the generated code.

How much optimization has been requested.

Most compilers let you specify the amount of optimization: typically none for debugging (quicker compiles!) and full optimization for the released code.

V. FLEX AND LEXICAL ANALYSIS

From the area of compilers, we get a host of tools to convert text files into programs. The first part of that process is often called lexical analysis, particularly for such languages as C. A good tool for creating lexical analyzers is flex. It takes a specification file and creates an analyzer, usually called lex.yy.c.

Flex: The Fast Lexical Analyzer

It is a tool for generating programs that perform pattern matching on text. Flex is a free (but non-GNU) implementation of the original UNIX lex program.

Flex is a tool for generating scanners. A scanner, sometimes called a tokenizer, is a program which recognizes lexical patterns in text. The flex program reads user-specified input files, or its standard input if no file names are given, for a description of a scanner to generate. The description is in the form of pairs of regular expressions and C code, called rules. Flex generates a C source file named "lex.yy.c", which defines the function yylex(). The file "lex.yy.c" can be compiled and linked to produce an executable. When the executable is run, it analyzes its input for occurrences of text matching the regular expressions for each rule. Whenever it finds a match, it executes the corresponding C code.

The scanner generated by flex:

Is linked with the flex library (libfl.a) by passing -lfl when linking.

Can be called as yylex().

Is easy to interface with bison/yacc.

.l file -> lex -> lex.yy.c

lex.yy.c and other files -> gcc -> lexical analyzer

input stream -> lexical analyzer -> actions taken when rules applied

A. Simple Example

First some simple examples to get the flavor of how one uses flex. The following flex input specifies a scanner which, whenever it encounters the string "username", will replace it with the user's login name:

%%

username printf( "%s", getlogin() );

By default, any text not matched by a flex scanner is copied to the output, so the net effect of this scanner is to copy its input file to its output with each occurrence of "username" expanded. In this input, there is just one rule. "username" is the pattern and the "printf" is the action. The "%%" marks the beginning of the rules.
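For comparison, the net effect of that one-rule scanner can be sketched by hand in plain C. This is only an illustration of the behaviour, not the table-driven code flex generates; the function name expand_username is hypothetical, and the login name is passed in as a parameter instead of being fetched with getlogin() so the sketch stays self-contained.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Copy `in` to `out`, replacing each occurrence of "username" with `login`.
 * Unmatched characters are copied through unchanged, which mirrors the
 * default flex action. Output is truncated if it does not fit.
 * Returns the number of characters written, excluding the terminator. */
size_t expand_username(const char *in, const char *login,
                       char *out, size_t out_size)
{
    static const char pattern[] = "username";
    const size_t pattern_len = sizeof pattern - 1;
    size_t login_len;
    size_t used = 0;

    assert(in != NULL && login != NULL && out != NULL && out_size > 0);
    login_len = strlen(login);

    while (*in != '\0') {
        if (strncmp(in, pattern, pattern_len) == 0) {
            /* The "rule" matched: emit the login name instead of the lexeme. */
            if (used + login_len >= out_size)
                break;
            memcpy(out + used, login, login_len);
            used += login_len;
            in += pattern_len;
        } else {
            /* No rule matched: copy one character through. */
            if (used + 1 >= out_size)
                break;
            out[used++] = *in++;
        }
    }
    out[used] = '\0';
    return used;
}
```

With a login name of "vern", the input "hi username" becomes "hi vern".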

B. Flex Regular Expression

s string s literally

\c character c literally, where c would normally be a lex operator

[s] character class

^ beginning of line

[^s] characters not in character class

[s-t] range of characters

s? s occurs zero or one time

. any character except newline

s* zero or more occurrences of s

s+ one or more occurrences of s

r|s r or s

(s) grouping

$ end of line

s/r s if and only if followed by r (not recommended) (r is *NOT* consumed)

s{m,n} m through n occurrences of s

C. Flex Input File Format

The flex input file consists of three sections, separated by a line with just `%%' in it:

definitions

%%

rules

%%

user code


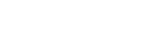

VI. WORKING OF FLEX

Flex is a program generator that produces source code for recognizing regular expressions when given pattern specifications as input. The specifications allow an action to be associated with each input pattern. A flex-produced DFA (deterministic finite automaton) performs the recognition of regular expressions. Flex deals with ambiguous expressions by always choosing the longest matching string in the input stream.
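The longest-match rule can be illustrated with a small C sketch. It is a deliberate simplification: the "patterns" here are literal strings compared one by one, whereas flex compiles regular expressions into a DFA. The function name longest_match is hypothetical. Ties are broken in favour of the rule listed first, which is also how flex resolves them.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Among literal `rules`, find the one matching the longest prefix of `input`.
 * Returns the index of the winning rule, or -1 if none matches, and stores
 * the matched length in *match_len. Earlier rules win ties because a later
 * rule must be strictly longer to replace the current best. */
int longest_match(const char *input, const char *const rules[],
                  size_t rule_count, size_t *match_len)
{
    int best = -1;
    size_t best_len = 0;
    size_t i;

    assert(input != NULL && rules != NULL && match_len != NULL);
    for (i = 0; i < rule_count; i++) {
        size_t len = strlen(rules[i]);
        if (len > best_len && strncmp(input, rules[i], len) == 0) {
            best = (int)i;
            best_len = len;
        }
    }
    *match_len = best_len;
    return best;
}
```

Given the rules ">", ">=" and ">>=", the input ">>= x" is matched by the third rule with length 3, even though ">" alone would also match.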

Lex transforms the user's input table of regular expressions and actions into a function called yylex(). The yylex() function, when incorporated into your host-language program, performs each action as the associated pattern is recognized. Flex produces its output as C source code, and can also generate a C++ scanner class. In either case, the yylex() function incorporates the highly efficient string matching routines of Aho and Corasick (Communications of the ACM, Vol. 18, 1975).

The yylex() function produced by Lex will generally require time proportional to the length of the input stream. The running time is linear in the input and independent of the number of rules. As the number and complexity of rules increases, yylex() will tend to increase in size only. Speed will decrease only when the input rules require extensive forward scanning of the input.

fig 2. Working of flex

A. Flex Actions

Actions are C source fragments. If an action is compound, or takes more than one line, enclose it in braces ('{' '}').

Example rules:

[a-z]+ printf ("found word\n");

[A-Z][a-z]* {
printf ("found capitalized word:\n");
printf (" '%s'\n", yytext);
}

There are a number of special directives that can be included within an action:

ECHO

Copies yytext to the scanner's output.

BEGIN

Followed by the name of a start condition places the scanner in the corresponding start condition (see below).

REJECT

Directs the scanner to proceed on to the "second best" rule which matched the input (or a prefix of the input). The rule is chosen by the usual matching rules (longest match first, then the rule listed earliest), and yytext and yyleng are set up appropriately. It may either be one which matched as much text as the originally chosen rule but came later in the flex input file, or one which matched less text. For example, the following will both count the words in the input and call the routine special() whenever frob is seen:

    int word_count = 0;

%%

frob special(); REJECT;

[^ \t\n]+ ++word_count;

B. Flex Definitions

The form is simply:

name definition

The name is a word beginning with a letter (or an underscore, but I don't recommend those for general use) followed by zero or more letters, digits, underscores, or dashes.

The definition runs from the first non-whitespace character after the name to the end of the line. You can refer to it via {name}, which will expand to (definition). (cite: this is largely from "man flex".)

For example:

DIGIT [0-9]

Now if you have a rule that looks like

{DIGIT}*\.{DIGIT}+

that is the same as writing

([0-9])*\.([0-9])+
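To see what that pattern accepts, here is a hand-written C sketch that matches ([0-9])*\.([0-9])+ at the start of a string. It is only an illustration of the pattern's meaning, not the code flex generates, and the function name match_decimal is hypothetical.

```c
#include <assert.h>
#include <ctype.h>
#include <stddef.h>

/* Return the length of the longest prefix of `s` matching
 * [0-9]*\.[0-9]+ , or 0 if the prefix does not match. */
size_t match_decimal(const char *s)
{
    size_t i = 0;
    size_t fraction_start;

    assert(s != NULL);
    while (isdigit((unsigned char)s[i]))    /* {DIGIT}*  : optional integer part */
        i++;
    if (s[i] != '.')                        /* \.        : a literal dot is required */
        return 0;
    i++;
    fraction_start = i;
    while (isdigit((unsigned char)s[i]))    /* {DIGIT}+  : at least one fraction digit */
        i++;
    return (i > fraction_start) ? i : 0;
}
```

So ".5" and "12.34" match in full, while "12." does not match because the fraction part needs at least one digit.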

VII. GENERATED SCANNER

The output of flex is the file lex.yy.c, which contains the scanning routine yylex(), a number of tables used by it for matching tokens, and a number of auxiliary routines and macros. By default, yylex() is declared as follows:

int yylex()
{
... various definitions and the actions in here ...
}

(If your environment supports function prototypes, then it will be "int yylex( void )".) This definition may be changed by defining the "YY_DECL" macro. For example, you could use:

#define YY_DECL float lexscan (a, b) float a, b;

to give the scanning routine the name lexscan, returning a float, and taking two floats as arguments. Note that if you give arguments to the scanning routine using a K&R-style/non-prototyped function declaration, you must terminate the definition with a semi-colon (;).

Whenever yylex() is called, it scans tokens from the global input file yyin (which defaults to stdin). It continues until it either reaches an end-of-file (at which point it returns the value 0) or one of its actions executes a return statement.

If the scanner reaches an end-of-file, subsequent calls are undefined unless either yyin is pointed at a new input file (in which case scanning continues from that file), or yyrestart() is called. yyrestart() takes one argument, a FILE * pointer (which can be nil, if you've set up YY_INPUT to scan from a source other than yyin), and initializes yyin for scanning from that file. Essentially there is no difference between just assigning yyin to a new input file and using yyrestart() to do so; the latter is available for compatibility with previous versions of flex, and because it can be used to switch input files in the middle of scanning. It can also be used to throw away the current input buffer, by calling it with an argument of yyin; but it is better to use YY_FLUSH_BUFFER. Note that yyrestart() does not reset the start condition to INITIAL.

If yylex() stops scanning due to executing a return statement in one of the actions, the scanner may then be called again and it will resume scanning where it left off.

By default (and for purposes of efficiency), the scanner uses block-reads rather than simple getc() calls to read characters from yyin. How it gets its input can be controlled by defining the YY_INPUT macro. YY_INPUT's calling sequence is "YY_INPUT(buf,result,max_size)". Its action is to place up to max_size characters in the character array buf and return in the integer variable result either the number of characters read or the constant YY_NULL (0 on Unix systems) to indicate EOF. The default YY_INPUT reads from the global file-pointer "yyin".

A sample definition of YY_INPUT (in the definitions section of the input file):

%{
#define YY_INPUT(buf,result,max_size) \
{ \
int c = getchar(); \
result = (c == EOF) ? YY_NULL : (buf[0] = c, 1); \
}
%}

This definition changes the input processing to occur one character at a time.

When the scanner receives an end-of-file indication from YY_INPUT, it then checks the yywrap() function. If yywrap() returns false (zero), then it is assumed that the function has gone ahead and set up yyin to point to another input file, and scanning continues. If it returns true (non-zero), then the scanner terminates, returning 0 to its caller. Note that in either case, the start condition remains unchanged; it does not revert to INITIAL.

If you do not supply your own version of yywrap(), then you must either use %option noyywrap (in which case the scanner behaves as though yywrap() returned 1), or you must link with -lfl to obtain the default version of the routine, which always returns 1.

Three routines are available for scanning from in-memory buffers rather than files: yy_scan_string(), yy_scan_bytes(), and yy_scan_buffer().

The scanner writes its ECHO output to the yyout global (default, stdout), which may be redefined by the user simply by assigning it to some other FILE pointer.

VIII. OPTIONS

-b: generates backing-up information to lex.backup. This is a list of scanner states which require backing up and the input characters on which they do so. By adding rules one can remove backing-up states. If all backing-up states are eliminated and -Cf or -CF is used, the generated scanner will run faster (see the -p flag). Only users who wish to squeeze every last cycle out of their scanners need worry about this option.

-c: is a do-nothing, deprecated option included for POSIX compliance.

-f: specifies fast scanner. No table compression is done and stdio is bypassed. The result is large but fast. This option is equivalent to -Cfr.

-h: generates a "help" summary of flex's options to stdout and then exits. -? and --help are synonyms for -h.

-n: is another do-nothing, deprecated option included only for POSIX compliance.

-B: instructs flex to generate a batch scanner, the opposite of the interactive scanners generated by -I. In general, you use -B when you are certain that your scanner will never be used interactively, and you want to squeeze a little more performance out of it. If your goal is instead to squeeze out a lot more performance, you should be using the -Cf or -CF options, which turn on -B automatically anyway.

IX. LEX

In computer science, lex is a program that generates lexical analyzers ("scanners" or "lexers"). Lex is commonly used with the yacc parser generator. Lex, originally written by Mike Lesk and Eric Schmidt, is the standard lexical analyzer generator on many Unix systems, and a tool exhibiting its behavior is specified as part of the POSIX standard.

Lex reads an input stream specifying the lexical analyzer and outputs source code implementing the lexer in the C programming language.

Though traditionally proprietary software, versions of Lex based on the original AT&T code are available as open source, as part of systems such as OpenSolaris and Plan 9 from Bell Labs. Another popular open source version of Lex is Flex, the "fast lexical analyzer".

X. STRUCTURE OF LEX

The structure of a lex file is intentionally similar to that of a yacc file; files are divided into three sections, separated by lines that contain only two percent signs, as follows:

Definition section

%%

Rules section

%%

C code section

The definition section is the place to define macros and to import header files written in C. It is also possible to write any C code here, which will be copied verbatim into the generated source file.

The rules section is the most important section; it associates patterns with C statements. Patterns are simply regular expressions. When the lexer sees some text in the input matching a given pattern, it executes the associated C code. This is the basis of how lex operates.

The C code section contains C statements and functions that are copied verbatim to the generated source file. These statements presumably contain code called by the rules in the rules section. In large programs it is more convenient to place this code in a separate file and link it in at compile time.

XI. INTRODUCTION TO TOKENS AND LEXEMES

Suppose you're not only reading data files but reading (and perhaps interpreting) a scripting language input file, such as Perl or VB source code. Lexical analysis is the lowest level translation activity. The purpose of a lexical analyzer or scanner is to convert an incoming stream of characters into an outgoing stream of tokens. The scanner operates by matching patterns of characters into lexemes. Each pattern describes what an instance of a particular token must match. For example, a common pattern for an identifier (for example, a user-specified variable or constant) in a script language is a letter followed by one or more occurrences of a letter or digit. Some lexemes that would match this pattern are index, sum, and i47.

Things that your input stream defines as useless, such as white space and comments, are not lexemes and can be safely discarded by the scanner. Several classes of tokens are found in the definitions of most script languages.

TABLE 1. Typical Tokens

Keywords: Reserved words (such as procedure and return) that cannot be redefined

Operators: Typically strings (1-3 characters) such as /, >=, and >>= used in expressions

Identifiers: User-specified objects similar to keywords in form

Numbers: Integer, real, or double-precision as specified

Character constants: Single characters such as c or \0

Character strings: Zero or more characters stored differently than character constants

EOLN and EOF: Logical end-of-line and end-of-input markers

XII. LEXICAL ANALYSIS TERMS

A token is a group of characters having collective meaning.

A lexeme is an actual character sequence forming a specific instance of a token, such as num.

A pattern is a rule expressed as a regular expression and describing how a particular token can be formed.

For example, [A-Za-z][A-Za-z_0-9]* is a rule.

Characters between tokens are called whitespace; these include spaces, tabs, newlines, and form feeds. Many people also count comments as whitespace, though since some tools such as lint/splint look at comments, this conflation is not perfect.

Attributes for tokens

Tokens can have attributes that can be passed back to the calling function.

Constants could have the value of the constant, for instance.

Identifiers might have a pointer to a location where information is kept about the identifier.
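One common way to carry such attributes in C is a tagged union, sketched below. All names here (token_kind, symbol, make_constant, make_identifier) are hypothetical and chosen for illustration; the symbol structure stands in for whatever symbol-table entry a real compiler would keep.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical token classes that carry an attribute. */
enum token_kind { TOK_CONSTANT, TOK_IDENTIFIER };

/* Hypothetical symbol-table entry holding information about an identifier. */
struct symbol {
    const char *name;
    int declared;
};

struct token {
    enum token_kind kind;
    union {
        long value;              /* valid when kind == TOK_CONSTANT */
        struct symbol *symbol;   /* valid when kind == TOK_IDENTIFIER */
    } attr;
};

/* Build a constant token whose attribute is the constant's value. */
struct token make_constant(long value)
{
    struct token tok;
    tok.kind = TOK_CONSTANT;
    tok.attr.value = value;
    return tok;
}

/* Build an identifier token whose attribute points at its symbol entry. */
struct token make_identifier(struct symbol *entry)
{
    struct token tok;
    assert(entry != NULL);
    tok.kind = TOK_IDENTIFIER;
    tok.attr.symbol = entry;
    return tok;
}
```

The caller checks kind first and then reads only the matching member of attr.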

XIII. CONCLUSION

Flex generates C99 function definitions by default. However, flex does have the ability to generate obsolete, er, traditional, function definitions. This is to support bootstrapping gcc on old systems. Unfortunately, traditional definitions prevent us from using any standard data types smaller than int (such as short, char, or bool) as function arguments. For this reason, future versions of flex may generate standard C99 code only, leaving K&R-style functions to the historians.